Introduction

High Performance Computing at Lewis & Clark

On the Lewis & Clark campus, High Performance Computing and Data Science efforts are supported in part by the Watzek Library's Digital Initiatives office. We support HPC both in the classroom and in departmental research. Our support includes hardware administration, software installation and support, occasional help with algorithms, and other assistance as needed.

What is High Performance Computing?

High Performance Computing, or HPC, is the use of supercomputers and parallel processing techniques to solve complex computational problems. HPC work focuses on developing parallel algorithms and systems, combining system administration with parallel computational techniques. HPC is typically used to solve advanced problems and to carry out research through computer modeling, simulation, and analysis. HPC systems deliver sustained performance by using many computing resources concurrently. While this technical description of HPC is very specific, it isn't especially readable, so let's unpack it a little:

Most people think of High Performance Computing (HPC) in terms of the architecture of the computer it runs on. In standard computing, a task runs on one physical computer with one or more processor cores. All of these cores can access a common pool of shared memory, which makes it easy for two or more programs, or parts of a single program, to see what the others are doing. Most high performance systems, on the other hand, are clusters: many separate multicore computers (nodes) connected over a network. In this configuration, parts of a program running on separate physical machines cannot read each other's memory and can communicate only by sending data over the network. The following diagram shows a group of multicore computers working together as a single cluster:

Because of the dependence on networking, programming for HPC environments requires special considerations that normal programming does not.

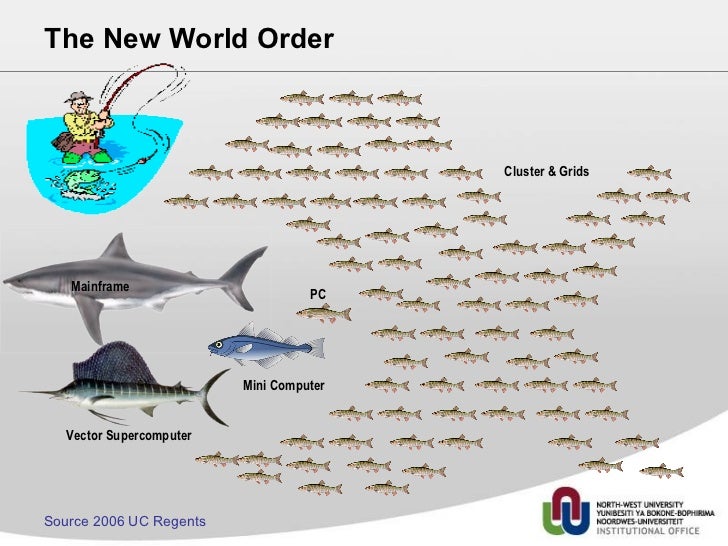
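To make the difference between the two models concrete, here is a minimal single-machine sketch in Python (the function names and data are hypothetical, chosen only for illustration). In `shared_memory_total`, threads write their partial sums into one list that all of them can see. In `message_passing_total`, each worker sums only its own chunk and reports back by putting a message in a queue, which stands in for the network. This is a simulation: the threads still share memory underneath, whereas on a real cluster the workers would be separate processes on separate nodes, usually communicating through a message-passing library such as MPI.

```python
import queue
import threading


def shared_memory_total(chunks):
    """Shared memory: every thread writes into one list that all threads can see."""
    partial = [0] * len(chunks)

    def work(i):
        # Write the partial sum directly into shared memory.
        partial[i] = sum(chunks[i])

    threads = [threading.Thread(target=work, args=(i,)) for i in range(len(chunks))]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(partial)


def message_passing_total(chunks):
    """Simulated cluster: each worker only uses its own chunk and must
    send its result as a message to the coordinator's mailbox."""
    mailbox = queue.Queue()  # stands in for the network between nodes

    def node(rank, data):
        # "Send" a message tagged with this worker's rank.
        mailbox.put((rank, sum(data)))

    threads = [threading.Thread(target=node, args=(r, c)) for r, c in enumerate(chunks)]
    for t in threads:
        t.start()
    # The coordinator learns results only by "receiving" one message per worker.
    results = dict(mailbox.get() for _ in chunks)
    for t in threads:
        t.join()
    return sum(results.values())


if __name__ == "__main__":
    data = list(range(1, 101))
    chunks = [data[i:i + 25] for i in range(0, len(data), 25)]
    print(shared_memory_total(chunks))    # 5050
    print(message_passing_total(chunks))  # 5050
```

Both functions return the same total; the point is how partial results travel, not speed. In standard CPython builds, threads do not run CPU-bound Python code in parallel because of the global interpreter lock, so this sketch shows the communication pattern rather than a speedup.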

In friendly terms, to attack a problem with HPC techniques, you break down the problem into many smaller problems, ideally all of which can be attacked at once. The following (fairly silly) graphic sums it up nicely:
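As a concrete, hypothetical example of this divide-and-conquer idea, the Python sketch below counts the primes below `n` by splitting the range into chunks, counting each chunk in a separate worker process with the standard library's `concurrent.futures.ProcessPoolExecutor`, and adding up the partial counts. It assumes a single multicore machine; spreading the chunks across the nodes of a cluster would need additional tooling (for example a job scheduler or MPI) that this sketch does not show.

```python
import math
from concurrent.futures import ProcessPoolExecutor


def split_range(n, parts):
    """Split the integers 0..n-1 into `parts` contiguous (start, stop) chunks."""
    base, extra = divmod(n, parts)
    chunks, start = [], 0
    for i in range(parts):
        # The first `extra` chunks get one extra number, so sizes differ by at most 1.
        stop = start + base + (1 if i < extra else 0)
        chunks.append((start, stop))
        start = stop
    return chunks


def count_primes(bounds):
    """Solve one independent sub-problem: count the primes in [start, stop)."""
    start, stop = bounds
    count = 0
    for k in range(max(start, 2), stop):
        # Trial division: k is prime if no d in 2..sqrt(k) divides it.
        if all(k % d for d in range(2, math.isqrt(k) + 1)):
            count += 1
    return count


def parallel_count_primes(n, workers=4):
    """Count primes below n by splitting the work across worker processes."""
    # 1. Break the big problem into smaller, independent pieces.
    chunks = split_range(n, workers)
    # 2. Attack all the pieces at once, one per worker process.
    with ProcessPoolExecutor(max_workers=workers) as pool:
        # 3. Combine the partial answers into the final result.
        return sum(pool.map(count_primes, chunks))


if __name__ == "__main__":
    print(parallel_count_primes(100_000))  # 9592
```

The prime-counting workload is only a placeholder; the same split, solve, combine pattern applies to any problem whose pieces do not depend on each other. Note that the chunks here are equal in size but not in cost (larger numbers take longer to test), and uneven work like this is a common reason real parallel jobs do not scale perfectly.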

What Resources are Available?

Lewis & Clark operates an HPC system called BLT. BLT is a small, Linux-based cluster computer made up of three worker nodes (bacon, lettuce, and tomato) and one multipurpose node that handles logins, the filesystem, and external data management. More BLT technical specifications are available at https://watzek.github.io/BLTdocs/.

BLT is a 144-core, Intel Xeon-based high performance cluster computer operated at Lewis & Clark College. BLT lets members of the L&C community run large-scale computations and simulations, analyze large amounts of data, carry out computation-based scientific research, and more.

BLT is currently open for business! If you have a project you believe could benefit from being run on an HPC system like BLT, please get in touch! We offer real-time support, software installation, and help with data management. We like to start with a meeting, either virtual or in person, and we try to offer custom solutions based on researchers' needs.

What is Data Science?

... under construction ...

Who Can Collaborate?

We are looking for collaborators who need an HPC environment for data- or compute-intensive research, data science, big data, memory-intensive or high-throughput work, or other HPC-related tasks, especially people who do not otherwise have access to HPC resources. If you are interested in collaborating, please read "How can I collaborate?" below!

How Can I Collaborate?

To get the ball rolling, please contact Jeremy McWilliams by emailing either jeremym@lclark.edu or blt-cluster@lclark.edu. After that, we like to meet, in person or virtually, to discuss your specific needs. We offer real-time help over Slack and will make sure our system has the software packages and modules you need. Access to our computational resources is currently free.

What Kinds of Things Have We Done?

Most of the work we have done thus far falls into one of two categories: coursework and research.

We have supported computational work in the following courses:

- BIO 407 - Venom Biology... in Australia!
  - Students performed bioinformatics and genomics computations, accessing our system remotely over the internet from Australia.

- CS 171 - Computer Science I
  - Students learn the basics of logging into a high performance system, submitting basic parallel jobs, and reading and writing parallel code. This introduces students to HPC even in an entry-level CS course.

- CS 211 - Cybersecurity
  - Students use computational and HPC resources for computationally intensive cybersecurity tasks, including cryptography and password cracking.

- CS 369 - AI & Machine Learning
  - Students design and train AI and ML models quickly using HPC resources, which lets class projects reach a meaningful scale.

- CS 467 - Advanced Graphics
  - Students use HPC resources to accelerate graphics rendering.

- CS 495 - Topics: Numerical Analysis (Spring 2019)
  - Students use HPC resources to compute and visualize numerical approximations for many problems, including solutions to ordinary and partial differential equations.

- CS 495 - Topics: Parallel & HPC (Spring 2019)
  - Students learn the fundamentals of HPC, parallel, and concurrent programming, and learn to operate and use clusters and other HPC and parallel computing resources.

- PHYS 380 - Computational Physics
  - Students apply HPC resources and techniques to physics problems, including Monte Carlo simulations, numerical integration, and other mathematical and computational problems.

- PHYS 491 - Honors Research
  - This is a student-designed research course; in at least one offering, a student used HPC resources to solve computational physics problems.

HPC resources have also been used in research. Some of these projects are summarized below:

- Designing an HPC workflow optimization system (CS)

- Designing an accessibility and ease-of-use platform for HPC systems (CS)

- Measuring cloud cover and designing climate models using neural networks (CS/ENVS)

- Strong Nuclear Force and Quantum Entanglement Binding Entropy (PHYS)

- Designing and writing an HPC Open Education Resource (CS)

- Bioinformatics Practica (BIO/CS)